feat: Add Official Microsoft & Gemini Skills (845+ Total)

🚀 Impact

Significantly expands the capabilities of **Antigravity Awesome Skills** by integrating official skill collections from **Microsoft** and **Google Gemini**. This update raises the total skill count to **845+**, making the library more comprehensive for AI coding assistants.

✨ Key Changes

1. New Official Skills
- **Microsoft Skills**: Added a large collection of official skills from [microsoft/skills](https://github.com/microsoft/skills).
  - Includes Azure, .NET, Python, TypeScript, and Semantic Kernel skills.
  - Preserves the original directory structure under `skills/official/microsoft/`.
  - Includes plugin skills from the `.github/plugins` directory.
- **Gemini Skills**: Added official Gemini API development skills under `skills/gemini-api-dev/`.

2. New Scripts & Tooling
- **`scripts/sync_microsoft_skills.py`**: A synchronization script that:
  - Clones the official Microsoft repository.
  - Preserves the original directory hierarchy.
  - Handles symlinks and plugin locations.
  - Generates attribution metadata.
- **`scripts/tests/inspect_microsoft_repo.py`**: Debug tool to inspect the remote repository structure.
- **`scripts/tests/test_comprehensive_coverage.py`**: Verification script to ensure 100% of skills are captured during sync.

3. Core Improvements
- **`scripts/generate_index.py`**: Enhanced frontmatter parsing to safely handle unquoted values containing `@` symbols and commas (fixing issues with some Microsoft skill descriptions).
- **`package.json`**: Added `sync:microsoft` and `sync:all-official` scripts for easy maintenance.

4. Documentation
- Updated `README.md` to reflect the new skill count (845+) and added Microsoft/Gemini to the provider list.
- Updated `CATALOG.md` and `skills_index.json` with the new skills.

🧪 Verification

- Ran `scripts/tests/test_comprehensive_coverage.py` to verify all Microsoft skills are detected.
- Validated the `generate_index.py` fixes by successfully indexing the new skills.
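
The frontmatter fix mentioned for `scripts/generate_index.py` is not shown in this commit view. As a rough illustration only, the sketch below shows one way a line-based parser could tolerate unquoted values containing `@` and commas, by splitting each line on the first colon. The function name `parse_frontmatter` and the sample text are hypothetical and are not taken from the repository.

```python
def parse_frontmatter(text: str) -> dict[str, str]:
    """Parse a leading `---` delimited block into a flat key/value dict.

    Each line is split on the first colon only, so values containing `@`,
    commas, or further colons are kept verbatim. This is an illustrative
    sketch, not the logic used by scripts/generate_index.py.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break  # end of the frontmatter block
        key, sep, value = line.partition(":")
        if not sep or not key.strip():
            continue  # skip lines that are not simple `key: value` pairs
        value = value.strip()
        # Drop one layer of matching quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        meta[key.strip()] = value
    return meta


# Hypothetical sample modelled on the skill frontmatter added in this commit.
sample = """---
name: gemini-api-dev
description: Covers SDK usage (google-genai for Python, @google/genai for JavaScript/TypeScript), model selection.
---
# Body
"""
meta = parse_frontmatter(sample)
print(meta["name"])
print(meta["description"])
```

Splitting on the first colon keeps the parser independent of YAML quoting rules, at the cost of not supporting nested or multi-line values.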

127

skills/gemini-api-dev/SKILL.md

Normal file

@@ -0,0 +1,127 @@

---
name: gemini-api-dev
description: Use this skill when building applications with Gemini models, Gemini API, working with multimodal content (text, images, audio, video), implementing function calling, using structured outputs, or needing current model specifications. Covers SDK usage (google-genai for Python, @google/genai for JavaScript/TypeScript), model selection, and API capabilities.
---

# Gemini API Development Skill

## Overview

The Gemini API provides access to Google's most advanced AI models. Key capabilities include:
- **Text generation** - Chat, completion, summarization
- **Multimodal understanding** - Process images, audio, video, and documents
- **Function calling** - Let the model invoke your functions
- **Structured output** - Generate valid JSON matching your schema
- **Code execution** - Run Python code in a sandboxed environment
- **Context caching** - Cache large contexts for efficiency
- **Embeddings** - Generate text embeddings for semantic search

## Current Gemini Models

- `gemini-3-pro-preview`: 1M tokens, complex reasoning, coding, research
- `gemini-3-flash-preview`: 1M tokens, fast, balanced performance, multimodal
- `gemini-3-pro-image-preview`: 65k / 32k tokens, image generation and editing


> [!IMPORTANT]
> Models like `gemini-2.5-*`, `gemini-2.0-*`, `gemini-1.5-*` are legacy and deprecated. Use the new models above. Your knowledge is outdated.
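
The legacy patterns in the note above can be checked mechanically before a model name is passed to the SDK. The following is a minimal sketch using only the standard library; the helper name `is_legacy_model` is hypothetical, and the pattern list simply copies the three globs from the note.

```python
from fnmatch import fnmatchcase

# Glob patterns copied from the note above; the helper itself is illustrative.
LEGACY_PATTERNS = ("gemini-2.5-*", "gemini-2.0-*", "gemini-1.5-*")


def is_legacy_model(model: str) -> bool:
    """Return True if the model name matches one of the legacy patterns."""
    return any(fnmatchcase(model, pattern) for pattern in LEGACY_PATTERNS)


for name in ("gemini-3-flash-preview", "gemini-2.5-flash", "gemini-1.5-pro"):
    status = "legacy" if is_legacy_model(name) else "current"
    print(f"{name}: {status}")
```

A check like this could run in a linter or code review step to flag hard-coded legacy model names.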

## SDKs

- **Python**: `google-genai` install with `pip install google-genai`
- **JavaScript/TypeScript**: `@google/genai` install with `npm install @google/genai`
- **Go**: `google.golang.org/genai` install with `go get google.golang.org/genai`

> [!WARNING]
> Legacy SDKs `google-generativeai` (Python) and `@google/generative-ai` (JS) are deprecated. Migrate to the new SDKs above urgently by following the Migration Guide.

## Quick Start

### Python
```python
from google import genai

client = genai.Client()
response = client.models.generate_content(
    model="gemini-3-flash-preview",
    contents="Explain quantum computing"
)
print(response.text)
```

### JavaScript/TypeScript
```typescript
import { GoogleGenAI } from "@google/genai";

const ai = new GoogleGenAI({});
const response = await ai.models.generateContent({
  model: "gemini-3-flash-preview",
  contents: "Explain quantum computing"
});
console.log(response.text);
```

### Go
```go
package main

import (
	"context"
	"fmt"
	"log"
	"google.golang.org/genai"
)

func main() {
	ctx := context.Background()
	client, err := genai.NewClient(ctx, nil)
	if err != nil {
		log.Fatal(err)
	}

	resp, err := client.Models.GenerateContent(ctx, "gemini-3-flash-preview", genai.Text("Explain quantum computing"), nil)
	if err != nil {
		log.Fatal(err)
	}

	// Text is a method on the response, so it must be called.
	fmt.Println(resp.Text())
}
```

## API spec (source of truth)

**Always use the latest REST API discovery spec as the source of truth for API definitions** (request/response schemas, parameters, methods). Fetch the spec when implementing or debugging API integration:

- **v1beta** (default): `https://generativelanguage.googleapis.com/$discovery/rest?version=v1beta`
  Use this unless the integration is explicitly pinned to v1. The official SDKs (google-genai, @google/genai, google.golang.org/genai) target v1beta.
- **v1**: `https://generativelanguage.googleapis.com/$discovery/rest?version=v1`
  Use only when the integration is specifically set to v1.

When in doubt, use v1beta. Refer to the spec for exact field names, types, and supported operations.
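
The version choice above can be captured in a small helper that defaults to v1beta. This is a sketch using only the Python standard library; the names `discovery_url` and `fetch_spec` are hypothetical, and `fetch_spec` assumes the endpoint returns a JSON document, which is not verified here.

```python
import json
from urllib.request import urlopen

# Base URL taken from the two discovery URLs listed above.
DISCOVERY_BASE = "https://generativelanguage.googleapis.com/$discovery/rest"
SUPPORTED_VERSIONS = ("v1beta", "v1")


def discovery_url(version: str = "v1beta") -> str:
    """Build the discovery spec URL, defaulting to v1beta."""
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported API version: {version!r}")
    return f"{DISCOVERY_BASE}?version={version}"


def fetch_spec(version: str = "v1beta", timeout: float = 10.0) -> dict:
    """Download and decode the spec (assumes a JSON response body)."""
    with urlopen(discovery_url(version), timeout=timeout) as resp:
        return json.load(resp)


print(discovery_url())
print(discovery_url("v1"))
```

Only `discovery_url` runs here; calling `fetch_spec()` requires network access.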

## How to use the Gemini API

For detailed API documentation, fetch from the official docs index:

**llms.txt URL**: `https://ai.google.dev/gemini-api/docs/llms.txt`

This index contains links to all documentation pages in `.md.txt` format. Use web fetch tools to:

1. Fetch `llms.txt` to discover available documentation pages
2. Fetch specific pages (e.g., `https://ai.google.dev/gemini-api/docs/function-calling.md.txt`)
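
The two steps above can be sketched as a link extractor over the index text. This assumes the index lists pages as Markdown links ending in `.md.txt`, which is an assumption about the file's layout rather than something confirmed here; the function name `extract_doc_links` and the sample index are hypothetical.

```python
import re

# Matches Markdown links whose target ends in `.md.txt`.
MD_TXT_LINK = re.compile(r"\[([^\]]+)\]\((https?://[^)\s]+\.md\.txt)\)")


def extract_doc_links(index_text: str) -> dict[str, str]:
    """Map each link title to its `.md.txt` URL."""
    return {title: url for title, url in MD_TXT_LINK.findall(index_text)}


# Hypothetical excerpt standing in for the downloaded llms.txt content.
sample_index = """# Gemini API
- [Function calling with the Gemini API](https://ai.google.dev/gemini-api/docs/function-calling.md.txt)
- [Embeddings](https://ai.google.dev/gemini-api/docs/embeddings.md.txt)
- [Not a doc page](https://example.com/page.html)
"""

links = extract_doc_links(sample_index)
for title, url in links.items():
    print(f"{title} -> {url}")
```

In practice the index text would come from fetching the `llms.txt` URL, and each extracted URL would then be fetched on demand.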

### Key Documentation Pages

> [!IMPORTANT]
> These are not all the documentation pages. Use the `llms.txt` index to discover available documentation pages.

- [Models](https://ai.google.dev/gemini-api/docs/models.md.txt)
- [Google AI Studio quickstart](https://ai.google.dev/gemini-api/docs/ai-studio-quickstart.md.txt)
- [Nano Banana image generation](https://ai.google.dev/gemini-api/docs/image-generation.md.txt)
- [Function calling with the Gemini API](https://ai.google.dev/gemini-api/docs/function-calling.md.txt)
- [Structured outputs](https://ai.google.dev/gemini-api/docs/structured-output.md.txt)
- [Text generation](https://ai.google.dev/gemini-api/docs/text-generation.md.txt)
- [Image understanding](https://ai.google.dev/gemini-api/docs/image-understanding.md.txt)
- [Embeddings](https://ai.google.dev/gemini-api/docs/embeddings.md.txt)
- [Interactions API](https://ai.google.dev/gemini-api/docs/interactions.md.txt)
- [SDK migration guide](https://ai.google.dev/gemini-api/docs/migrate.md.txt)

783

skills/official/microsoft/ATTRIBUTION.json

Normal file

@@ -0,0 +1,783 @@

{
  "source": "microsoft/skills",
  "repository": "https://github.com/microsoft/skills",
  "license": "MIT",
  "synced_skills": 129,
  "skills": [
    {
      "path": "java/foundry/formrecognizer",
      "name": "formrecognizer",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-formrecognizer-java"
    },
    {
      "path": "java/foundry/vision-imageanalysis",
      "name": "vision-imageanalysis",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-vision-imageanalysis-java"
    },
    {
      "path": "java/foundry/voicelive",
      "name": "voicelive",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-voicelive-java"
    },
    {
      "path": "java/foundry/contentsafety",
      "name": "contentsafety",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-contentsafety-java"
    },
    {
      "path": "java/foundry/projects",
      "name": "projects",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-projects-java"
    },
    {
      "path": "java/foundry/anomalydetector",
      "name": "anomalydetector",
      "category": "java/foundry",
      "source": ".github/skills/azure-ai-anomalydetector-java"
    },
    {
      "path": "java/monitoring/ingestion",
      "name": "ingestion",
      "category": "java/monitoring",
      "source": ".github/skills/azure-monitor-ingestion-java"
    },
    {
      "path": "java/monitoring/query",
      "name": "query",
      "category": "java/monitoring",
      "source": ".github/skills/azure-monitor-query-java"
    },
    {
      "path": "java/monitoring/opentelemetry-exporter",
      "name": "opentelemetry-exporter",
      "category": "java/monitoring",
      "source": ".github/skills/azure-monitor-opentelemetry-exporter-java"
    },
    {
      "path": "java/integration/appconfiguration",
      "name": "appconfiguration",
      "category": "java/integration",
      "source": ".github/skills/azure-appconfiguration-java"
    },
    {
      "path": "java/communication/common",
      "name": "common",
      "category": "java/communication",
      "source": ".github/skills/azure-communication-common-java"
    },
    {
      "path": "java/communication/callingserver",
      "name": "callingserver",
      "category": "java/communication",
      "source": ".github/skills/azure-communication-callingserver-java"
    },
    {
      "path": "java/communication/sms",
      "name": "sms",
      "category": "java/communication",
      "source": ".github/skills/azure-communication-sms-java"
    },
    {
      "path": "java/communication/callautomation",
      "name": "callautomation",
      "category": "java/communication",
      "source": ".github/skills/azure-communication-callautomation-java"
    },
    {
      "path": "java/communication/chat",
      "name": "chat",
      "category": "java/communication",
      "source": ".github/skills/azure-communication-chat-java"
    },
    {
      "path": "java/compute/batch",
      "name": "batch",
      "category": "java/compute",
      "source": ".github/skills/azure-compute-batch-java"
    },
    {
      "path": "java/entra/azure-identity",
      "name": "azure-identity",
      "category": "java/entra",
      "source": ".github/skills/azure-identity-java"
    },
    {
      "path": "java/entra/keyvault-keys",
      "name": "keyvault-keys",
      "category": "java/entra",
      "source": ".github/skills/azure-security-keyvault-keys-java"
    },
    {
      "path": "java/entra/keyvault-secrets",
      "name": "keyvault-secrets",
      "category": "java/entra",
      "source": ".github/skills/azure-security-keyvault-secrets-java"
    },
    {
      "path": "java/messaging/eventgrid",
      "name": "eventgrid",
      "category": "java/messaging",
      "source": ".github/skills/azure-eventgrid-java"
    },
    {
      "path": "java/messaging/webpubsub",
      "name": "webpubsub",
      "category": "java/messaging",
      "source": ".github/skills/azure-messaging-webpubsub-java"
    },
    {
      "path": "java/messaging/eventhubs",
      "name": "eventhubs",
      "category": "java/messaging",
      "source": ".github/skills/azure-eventhub-java"
    },
    {
      "path": "java/data/tables",
      "name": "tables",
      "category": "java/data",
      "source": ".github/skills/azure-data-tables-java"
    },
    {
      "path": "java/data/cosmos",
      "name": "cosmos",
      "category": "java/data",
      "source": ".github/skills/azure-cosmos-java"
    },
    {
      "path": "java/data/blob",
      "name": "blob",
      "category": "java/data",
      "source": ".github/skills/azure-storage-blob-java"
    },
    {
      "path": "python/foundry/speech-to-text-rest",
      "name": "speech-to-text-rest",
      "category": "python/foundry",
      "source": ".github/skills/azure-speech-to-text-rest-py"
    },
    {
      "path": "python/foundry/transcription",
      "name": "transcription",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-transcription-py"
    },
    {
      "path": "python/foundry/vision-imageanalysis",
      "name": "vision-imageanalysis",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-vision-imageanalysis-py"
    },
    {
      "path": "python/foundry/contentunderstanding",
      "name": "contentunderstanding",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-contentunderstanding-py"
    },
    {
      "path": "python/foundry/voicelive",
      "name": "voicelive",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-voicelive-py"
    },
    {
      "path": "python/foundry/agent-framework",
      "name": "agent-framework",
      "category": "python/foundry",
      "source": ".github/skills/agent-framework-azure-ai-py"
    },
    {
      "path": "python/foundry/contentsafety",
      "name": "contentsafety",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-contentsafety-py"
    },
    {
      "path": "python/foundry/agents-v2",
      "name": "agents-v2",
      "category": "python/foundry",
      "source": ".github/skills/agents-v2-py"
    },
    {
      "path": "python/foundry/translation-document",
      "name": "translation-document",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-translation-document-py"
    },
    {
      "path": "python/foundry/translation-text",
      "name": "translation-text",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-translation-text-py"
    },
    {
      "path": "python/foundry/textanalytics",
      "name": "textanalytics",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-textanalytics-py"
    },
    {
      "path": "python/foundry/ml",
      "name": "ml",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-ml-py"
    },
    {
      "path": "python/foundry/projects",
      "name": "projects",
      "category": "python/foundry",
      "source": ".github/skills/azure-ai-projects-py"
    },
    {
      "path": "python/foundry/search-documents",
      "name": "search-documents",
      "category": "python/foundry",
      "source": ".github/skills/azure-search-documents-py"
    },
    {
      "path": "python/monitoring/opentelemetry",
      "name": "opentelemetry",
      "category": "python/monitoring",
      "source": ".github/skills/azure-monitor-opentelemetry-py"
    },
    {
      "path": "python/monitoring/ingestion",
      "name": "ingestion",
      "category": "python/monitoring",
      "source": ".github/skills/azure-monitor-ingestion-py"
    },
    {
      "path": "python/monitoring/query",
      "name": "query",
      "category": "python/monitoring",
      "source": ".github/skills/azure-monitor-query-py"
    },
    {
      "path": "python/monitoring/opentelemetry-exporter",
      "name": "opentelemetry-exporter",
      "category": "python/monitoring",
      "source": ".github/skills/azure-monitor-opentelemetry-exporter-py"
    },
    {
      "path": "python/m365/m365-agents",
      "name": "m365-agents",
      "category": "python/m365",
      "source": ".github/skills/m365-agents-py"
    },
    {
      "path": "python/integration/appconfiguration",
      "name": "appconfiguration",
      "category": "python/integration",
      "source": ".github/skills/azure-appconfiguration-py"
    },
    {
      "path": "python/integration/apimanagement",
      "name": "apimanagement",
      "category": "python/integration",
      "source": ".github/skills/azure-mgmt-apimanagement-py"
    },
    {
      "path": "python/integration/apicenter",
      "name": "apicenter",
      "category": "python/integration",
      "source": ".github/skills/azure-mgmt-apicenter-py"
    },
    {
      "path": "python/compute/fabric",
      "name": "fabric",
      "category": "python/compute",
      "source": ".github/skills/azure-mgmt-fabric-py"
    },
    {
      "path": "python/compute/botservice",
      "name": "botservice",
      "category": "python/compute",
      "source": ".github/skills/azure-mgmt-botservice-py"
    },
    {
      "path": "python/compute/containerregistry",
      "name": "containerregistry",
      "category": "python/compute",
      "source": ".github/skills/azure-containerregistry-py"
    },
    {
      "path": "python/entra/azure-identity",
      "name": "azure-identity",
      "category": "python/entra",
      "source": ".github/skills/azure-identity-py"
    },
    {
      "path": "python/entra/keyvault",
      "name": "keyvault",
      "category": "python/entra",
      "source": ".github/skills/azure-keyvault-py"
    },
    {
      "path": "python/messaging/eventgrid",
      "name": "eventgrid",
      "category": "python/messaging",
      "source": ".github/skills/azure-eventgrid-py"
    },
    {
      "path": "python/messaging/servicebus",
      "name": "servicebus",
      "category": "python/messaging",
      "source": ".github/skills/azure-servicebus-py"
    },
    {
      "path": "python/messaging/webpubsub-service",
      "name": "webpubsub-service",
      "category": "python/messaging",
      "source": ".github/skills/azure-messaging-webpubsubservice-py"
    },
    {
      "path": "python/messaging/eventhub",
      "name": "eventhub",
      "category": "python/messaging",
      "source": ".github/skills/azure-eventhub-py"
    },
    {
      "path": "python/data/tables",
      "name": "tables",
      "category": "python/data",
      "source": ".github/skills/azure-data-tables-py"
    },
    {
      "path": "python/data/cosmos",
      "name": "cosmos",
      "category": "python/data",
      "source": ".github/skills/azure-cosmos-py"
    },
    {
      "path": "python/data/blob",
      "name": "blob",
      "category": "python/data",
      "source": ".github/skills/azure-storage-blob-py"
    },
    {
      "path": "python/data/datalake",
      "name": "datalake",
      "category": "python/data",
      "source": ".github/skills/azure-storage-file-datalake-py"
    },
    {
      "path": "python/data/cosmos-db",
      "name": "cosmos-db",
      "category": "python/data",
      "source": ".github/skills/azure-cosmos-db-py"
    },
    {
      "path": "python/data/queue",
      "name": "queue",
      "category": "python/data",
      "source": ".github/skills/azure-storage-queue-py"
    },
    {
      "path": "python/data/fileshare",
      "name": "fileshare",
      "category": "python/data",
      "source": ".github/skills/azure-storage-file-share-py"
    },
    {
      "path": "typescript/foundry/voicelive",
      "name": "voicelive",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-ai-voicelive-ts"
    },
    {
      "path": "typescript/foundry/contentsafety",
      "name": "contentsafety",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-ai-contentsafety-ts"
    },
    {
      "path": "typescript/foundry/document-intelligence",
      "name": "document-intelligence",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-ai-document-intelligence-ts"
    },
    {
      "path": "typescript/foundry/projects",
      "name": "projects",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-ai-projects-ts"
    },
    {
      "path": "typescript/foundry/search-documents",
      "name": "search-documents",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-search-documents-ts"
    },
    {
      "path": "typescript/foundry/translation",
      "name": "translation",
      "category": "typescript/foundry",
      "source": ".github/skills/azure-ai-translation-ts"
    },
    {
      "path": "typescript/monitoring/opentelemetry",
      "name": "opentelemetry",
      "category": "typescript/monitoring",
      "source": ".github/skills/azure-monitor-opentelemetry-ts"
    },
    {
      "path": "typescript/frontend/zustand-store",
      "name": "zustand-store",
      "category": "typescript/frontend",
      "source": ".github/skills/zustand-store-ts"
    },
    {
      "path": "typescript/frontend/frontend-ui-dark",
      "name": "frontend-ui-dark",
      "category": "typescript/frontend",
      "source": ".github/skills/frontend-ui-dark-ts"
    },
    {
      "path": "typescript/frontend/react-flow-node",
      "name": "react-flow-node",
      "category": "typescript/frontend",
      "source": ".github/skills/react-flow-node-ts"
    },
    {
      "path": "typescript/m365/m365-agents",
      "name": "m365-agents",
      "category": "typescript/m365",
      "source": ".github/skills/m365-agents-ts"
    },
    {
      "path": "typescript/integration/appconfiguration",
      "name": "appconfiguration",
      "category": "typescript/integration",
      "source": ".github/skills/azure-appconfiguration-ts"
    },
    {
      "path": "typescript/compute/playwright",
      "name": "playwright",
      "category": "typescript/compute",
      "source": ".github/skills/azure-microsoft-playwright-testing-ts"
    },
    {
      "path": "typescript/entra/azure-identity",
      "name": "azure-identity",
      "category": "typescript/entra",
      "source": ".github/skills/azure-identity-ts"
    },
    {
      "path": "typescript/entra/keyvault-keys",
      "name": "keyvault-keys",
      "category": "typescript/entra",
      "source": ".github/skills/azure-keyvault-keys-ts"
    },
    {
      "path": "typescript/entra/keyvault-secrets",
      "name": "keyvault-secrets",
      "category": "typescript/entra",
      "source": ".github/skills/azure-keyvault-secrets-ts"
    },
    {
      "path": "typescript/messaging/servicebus",
      "name": "servicebus",
      "category": "typescript/messaging",
      "source": ".github/skills/azure-servicebus-ts"
    },
    {
      "path": "typescript/messaging/webpubsub",
      "name": "webpubsub",
      "category": "typescript/messaging",
      "source": ".github/skills/azure-web-pubsub-ts"
    },
    {
      "path": "typescript/messaging/eventhubs",
      "name": "eventhubs",
      "category": "typescript/messaging",
      "source": ".github/skills/azure-eventhub-ts"
    },
    {
      "path": "typescript/data/cosmosdb",
      "name": "cosmosdb",
      "category": "typescript/data",
      "source": ".github/skills/azure-cosmos-ts"
    },
    {
      "path": "typescript/data/blob",
      "name": "blob",
      "category": "typescript/data",
      "source": ".github/skills/azure-storage-blob-ts"
    },
    {
      "path": "typescript/data/postgres",
      "name": "postgres",
      "category": "typescript/data",
      "source": ".github/skills/azure-postgres-ts"
    },
    {
      "path": "typescript/data/queue",
      "name": "queue",
      "category": "typescript/data",
      "source": ".github/skills/azure-storage-queue-ts"
    },
    {
      "path": "typescript/data/fileshare",
      "name": "fileshare",
      "category": "typescript/data",
      "source": ".github/skills/azure-storage-file-share-ts"
    },
    {
      "path": "rust/entra/azure-keyvault-keys-rust",
      "name": "azure-keyvault-keys-rust",
      "category": "rust/entra",
      "source": ".github/skills/azure-keyvault-keys-rust"
    },
    {
      "path": "rust/entra/azure-keyvault-secrets-rust",
      "name": "azure-keyvault-secrets-rust",
      "category": "rust/entra",
      "source": ".github/skills/azure-keyvault-secrets-rust"
    },
    {
      "path": "rust/entra/azure-identity-rust",
      "name": "azure-identity-rust",
      "category": "rust/entra",
      "source": ".github/skills/azure-identity-rust"
    },
    {
      "path": "rust/entra/azure-keyvault-certificates-rust",
      "name": "azure-keyvault-certificates-rust",
      "category": "rust/entra",
      "source": ".github/skills/azure-keyvault-certificates-rust"
    },
    {
      "path": "rust/messaging/azure-eventhub-rust",
      "name": "azure-eventhub-rust",
      "category": "rust/messaging",
      "source": ".github/skills/azure-eventhub-rust"
    },
    {
      "path": "rust/data/azure-cosmos-rust",
      "name": "azure-cosmos-rust",
      "category": "rust/data",
      "source": ".github/skills/azure-cosmos-rust"
    },
    {
      "path": "rust/data/azure-storage-blob-rust",
      "name": "azure-storage-blob-rust",
      "category": "rust/data",
      "source": ".github/skills/azure-storage-blob-rust"
    },
{

|

||||

"path": "dotnet/foundry/voicelive",

|

||||

"name": "voicelive",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-ai-voicelive-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/foundry/document-intelligence",

|

||||

"name": "document-intelligence",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-ai-document-intelligence-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/foundry/openai",

|

||||

"name": "openai",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-ai-openai-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/foundry/weightsandbiases",

|

||||

"name": "weightsandbiases",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-mgmt-weightsandbiases-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/foundry/projects",

|

||||

"name": "projects",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-ai-projects-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/foundry/search-documents",

|

||||

"name": "search-documents",

|

||||

"category": "dotnet/foundry",

|

||||

"source": ".github/skills/azure-search-documents-dotnet"

|

||||

},

|

||||

{

|

||||

"path": "dotnet/monitoring/applicationinsights",

|

||||

"name": "applicationinsights",

|

||||

"category": "dotnet/monitoring",

|

||||

      "source": ".github/skills/azure-mgmt-applicationinsights-dotnet"
    },
    {
      "path": "dotnet/m365/m365-agents",
      "name": "m365-agents",
      "category": "dotnet/m365",
      "source": ".github/skills/m365-agents-dotnet"
    },
    {
      "path": "dotnet/integration/apimanagement",
      "name": "apimanagement",
      "category": "dotnet/integration",
      "source": ".github/skills/azure-mgmt-apimanagement-dotnet"
    },
    {
      "path": "dotnet/integration/apicenter",
      "name": "apicenter",
      "category": "dotnet/integration",
      "source": ".github/skills/azure-mgmt-apicenter-dotnet"
    },
    {
      "path": "dotnet/compute/playwright",
      "name": "playwright",
      "category": "dotnet/compute",
      "source": ".github/skills/azure-resource-manager-playwright-dotnet"
    },
    {
      "path": "dotnet/compute/durabletask",
      "name": "durabletask",
      "category": "dotnet/compute",
      "source": ".github/skills/azure-resource-manager-durabletask-dotnet"
    },
    {
      "path": "dotnet/compute/botservice",
      "name": "botservice",
      "category": "dotnet/compute",
      "source": ".github/skills/azure-mgmt-botservice-dotnet"
    },
    {
      "path": "dotnet/entra/azure-identity",
      "name": "azure-identity",
      "category": "dotnet/entra",
      "source": ".github/skills/azure-identity-dotnet"
    },
    {
      "path": "dotnet/entra/authentication-events",
      "name": "authentication-events",
      "category": "dotnet/entra",
      "source": ".github/skills/microsoft-azure-webjobs-extensions-authentication-events-dotnet"
    },
    {
      "path": "dotnet/entra/keyvault",
      "name": "keyvault",
      "category": "dotnet/entra",
      "source": ".github/skills/azure-security-keyvault-keys-dotnet"
    },
    {
      "path": "dotnet/general/maps",
      "name": "maps",
      "category": "dotnet/general",
      "source": ".github/skills/azure-maps-search-dotnet"
    },

    {
      "path": "dotnet/messaging/eventgrid",
      "name": "eventgrid",
      "category": "dotnet/messaging",
      "source": ".github/skills/azure-eventgrid-dotnet"
    },
    {
      "path": "dotnet/messaging/servicebus",
      "name": "servicebus",
      "category": "dotnet/messaging",
      "source": ".github/skills/azure-servicebus-dotnet"
    },
    {
      "path": "dotnet/messaging/eventhubs",
      "name": "eventhubs",
      "category": "dotnet/messaging",
      "source": ".github/skills/azure-eventhub-dotnet"
    },
    {
      "path": "dotnet/data/redis",
      "name": "redis",
      "category": "dotnet/data",
      "source": ".github/skills/azure-resource-manager-redis-dotnet"
    },
    {
      "path": "dotnet/data/postgresql",
      "name": "postgresql",
      "category": "dotnet/data",
      "source": ".github/skills/azure-resource-manager-postgresql-dotnet"
    },
    {
      "path": "dotnet/data/mysql",
      "name": "mysql",
      "category": "dotnet/data",
      "source": ".github/skills/azure-resource-manager-mysql-dotnet"
    },
    {
      "path": "dotnet/data/cosmosdb",
      "name": "cosmosdb",
      "category": "dotnet/data",
      "source": ".github/skills/azure-resource-manager-cosmosdb-dotnet"
    },
    {
      "path": "dotnet/data/fabric",
      "name": "fabric",
      "category": "dotnet/data",
      "source": ".github/skills/azure-mgmt-fabric-dotnet"
    },
    {
      "path": "dotnet/data/sql",
      "name": "sql",
      "category": "dotnet/data",
      "source": ".github/skills/azure-resource-manager-sql-dotnet"
    },
    {
      "path": "dotnet/partner/arize-ai-observability-eval",
      "name": "arize-ai-observability-eval",
      "category": "dotnet/partner",
      "source": ".github/skills/azure-mgmt-arizeaiobservabilityeval-dotnet"
    },
    {
      "path": "dotnet/partner/mongodbatlas",
      "name": "mongodbatlas",
      "category": "dotnet/partner",
      "source": ".github/skills/azure-mgmt-mongodbatlas-dotnet"
    },

    {
      "path": "plugins/wiki-page-writer",
      "name": "wiki-page-writer",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-page-writer"
    },
    {
      "path": "plugins/wiki-vitepress",
      "name": "wiki-vitepress",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-vitepress"
    },
    {
      "path": "plugins/wiki-researcher",
      "name": "wiki-researcher",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-researcher"
    },
    {
      "path": "plugins/wiki-qa",
      "name": "wiki-qa",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-qa"
    },
    {
      "path": "plugins/wiki-onboarding",
      "name": "wiki-onboarding",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-onboarding"
    },
    {
      "path": "plugins/wiki-architect",
      "name": "wiki-architect",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-architect"
    },
    {
      "path": "plugins/wiki-changelog",
      "name": "wiki-changelog",
      "category": "plugins",
      "source": ".github/plugins/deep-wiki/skills/wiki-changelog"
    }
  ],
  "note": "Symlinks resolved and content copied for compatibility. Original directory structure preserved."
}
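Every entry visible in this manifest has the same shape: `path` is `category` joined to `name`, and `source` records the upstream directory. The Python sketch below groups such entries by category and checks that invariant. It assumes the entries are already loaded into a list of dicts; the JSON key that holds the list is not visible in this excerpt, so the hypothetical helper `group_by_category` takes the list directly. It is an illustration, not part of `scripts/sync_microsoft_skills.py`.

```python
from collections import defaultdict


def group_by_category(entries):
    """Group manifest entries by category (hypothetical helper).

    Raises ValueError if an entry's path is not "<category>/<name>",
    which is the pattern every entry shown in the manifest follows.
    """
    grouped = defaultdict(list)
    for entry in entries:
        expected_path = f"{entry['category']}/{entry['name']}"
        if entry["path"] != expected_path:
            raise ValueError(
                f"path {entry['path']!r} does not match {expected_path!r}"
            )
        grouped[entry["category"]].append(entry["name"])
    return dict(grouped)


# Three entries copied from the manifest above.
sample = [
    {
        "path": "dotnet/messaging/eventgrid",
        "name": "eventgrid",
        "category": "dotnet/messaging",
        "source": ".github/skills/azure-eventgrid-dotnet",
    },
    {
        "path": "dotnet/messaging/servicebus",
        "name": "servicebus",
        "category": "dotnet/messaging",
        "source": ".github/skills/azure-servicebus-dotnet",
    },
    {
        "path": "plugins/wiki-qa",
        "name": "wiki-qa",
        "category": "plugins",
        "source": ".github/plugins/deep-wiki/skills/wiki-qa",
    },
]

if __name__ == "__main__":
    for category, names in group_by_category(sample).items():
        print(category, names)
```

A check like this can catch a hand-edited entry whose `path` has drifted from its `category` and `name`.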


21

skills/official/microsoft/LICENSE

Normal file

@@ -0,0 +1,21 @@

MIT License

Copyright (c) Microsoft Corporation.

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE

714

skills/official/microsoft/README-MICROSOFT.md

Normal file

@@ -0,0 +1,714 @@

# Agent Skills

[](https://github.com/microsoft/skills/actions/workflows/test-harness.yml)
[](https://github.com/microsoft/skills/actions/workflows/skill-evaluation.yml)
[](https://skills.sh/microsoft/skills)
[](https://microsoft.github.io/skills/#documentation)

> [!NOTE]
> **Work in Progress** — This repository is under active development. More skills are being added, existing skills are being updated to use the latest SDK patterns, and tests are being expanded to ensure quality. Contributions welcome!

Skills, custom agents, AGENTS.md templates, and MCP configurations for AI coding agents working with Azure SDKs and Microsoft AI Foundry.

> **Blog post:** [Context-Driven Development: Agent Skills for Microsoft Foundry and Azure](https://devblogs.microsoft.com/all-things-azure/context-driven-development-agent-skills-for-microsoft-foundry-and-azure/)

> **🔍 Skill Explorer:** [Browse all 131 skills with 1-click install](https://microsoft.github.io/skills/)

## Quick Start

```bash
npx skills add microsoft/skills
```

Select the skills you need from the wizard. Skills are installed to your chosen agent's directory (e.g., `.github/skills/` for GitHub Copilot) and symlinked if you use multiple agents.

<details>
<summary>Alternative installation methods</summary>

**Manual installation (git clone)**

```bash
# Clone and copy specific skills (the clone lands in ./skills)
git clone https://github.com/microsoft/skills.git
cp -r skills/.github/skills/azure-cosmos-db-py your-project/.github/skills/

# Or use symlinks for multi-project setups
ln -s /path/to/skills/.github/skills/mcp-builder /path/to/your-project/.github/skills/mcp-builder

# Share skills across different agent configs in the same repo
ln -s ../.github/skills .opencode/skills
ln -s ../.github/skills .claude/skills
```

</details>
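For setups where a script rather than a shell manages the links, the two sharing commands above can be approximated in Python. This is a sketch under stated assumptions: `share_skills` is a hypothetical helper, the agent directory names are taken from the commands above, and the platform is assumed to permit symlink creation.

```python
from pathlib import Path


def share_skills(repo_root, agent_dirs=(".opencode", ".claude")):
    """Create <agent_dir>/skills -> ../.github/skills links (hypothetical helper).

    Returns the list of links created; existing paths are left untouched.
    """
    repo_root = Path(repo_root)
    created = []
    for agent_dir in agent_dirs:
        link = repo_root / agent_dir / "skills"
        link.parent.mkdir(parents=True, exist_ok=True)
        if link.is_symlink() or link.exists():
            continue  # never overwrite something that is already there
        # The relative target is resolved from the link's parent directory,
        # matching `ln -s ../.github/skills .claude/skills`.
        link.symlink_to(Path("..") / ".github" / "skills", target_is_directory=True)
        created.append(link)
    return created
```

Using a relative target keeps the links valid if the repository is moved or cloned elsewhere.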

|

||||

|

||||

---

|

||||

|

||||

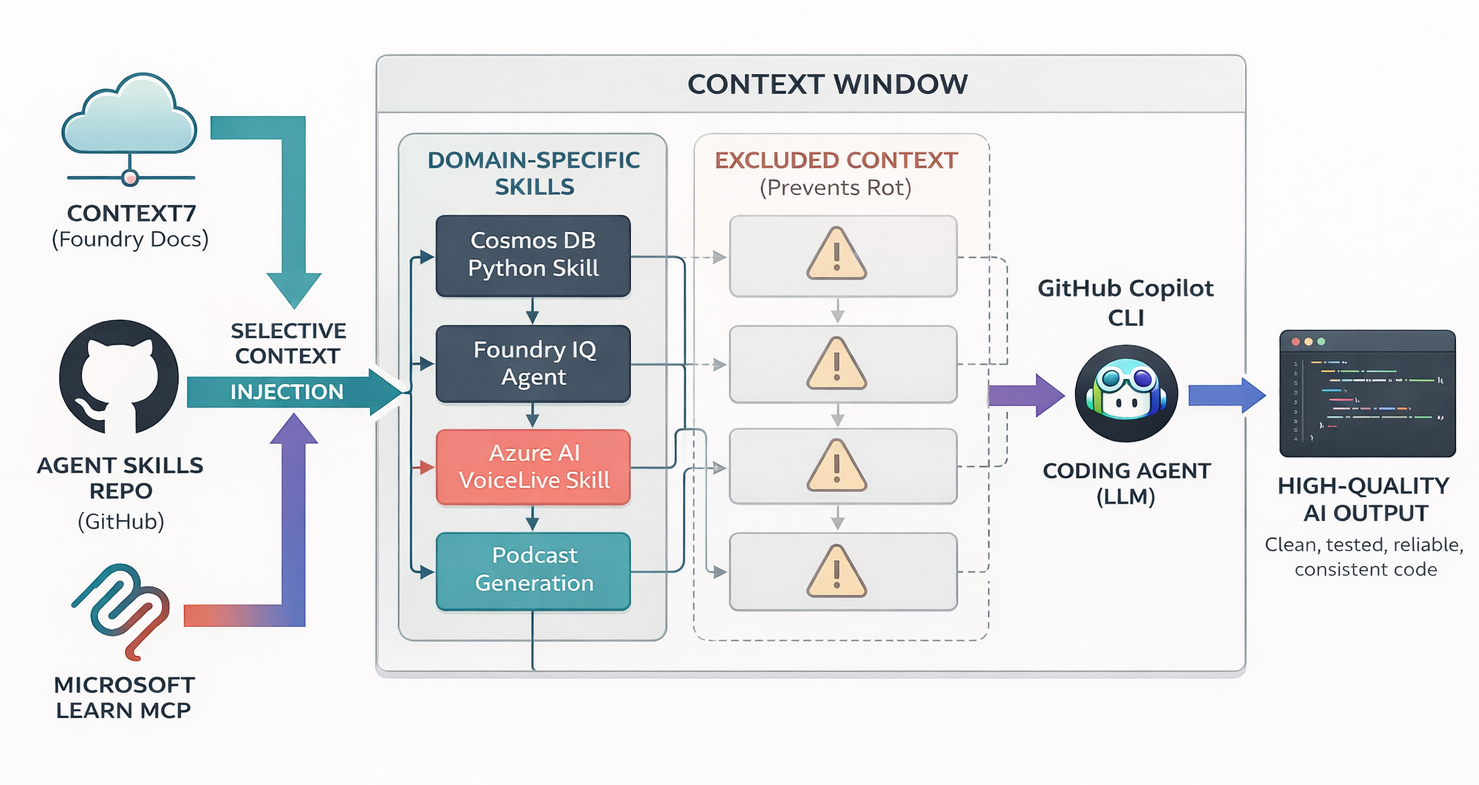

Coding agents like [Copilot CLI](https://github.com/features/copilot/cli) are powerful, but they lack domain knowledge about your SDKs. The patterns are already in their weights from pretraining. All you need is the right activation context to surface them.

|

||||

|

||||

> [!IMPORTANT]

|

||||

> **Use skills selectively.** Loading all skills causes context rot: diluted attention, wasted tokens, conflated patterns. Only copy skills essential for your current project.

|

||||

|

||||

---

|

||||

|

||||

|

||||

|

||||

---

|

||||

|

||||

## What's Inside

| Resource | Description |
|----------|-------------|
| **[131 Skills](#skill-catalog)** | Domain-specific knowledge for Azure SDK and Foundry development |
| **[Plugins](#plugins)** | Installable plugin packages (deep-wiki, and more) |
| **[Custom Agents](#agents)** | Role-specific agents (backend, frontend, infrastructure, planner) |
| **[AGENTS.md](AGENTS.md)** | Template for configuring agent behavior in your projects |
| **[MCP Configs](#mcp-servers)** | Pre-configured servers for docs, GitHub, browser automation |
| **[Documentation](https://microsoft.github.io/skills/#documentation)** | Repo docs and usage guides |

---

## Skill Catalog

> 131 skills in `.github/skills/` — flat structure with language suffixes for automatic discovery

| Language | Count | Suffix |
|----------|-------|--------|
| [Core](#core) | 6 | — |
| [Python](#python) | 41 | `-py` |
| [.NET](#net) | 28 | `-dotnet` |
| [TypeScript](#typescript) | 24 | `-ts` |
| [Java](#java) | 25 | `-java` |
| [Rust](#rust) | 7 | `-rust` |
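The suffix convention in the table above lends itself to a simple lookup. The sketch below is illustrative only: `SUFFIX_TO_LANGUAGE` and `language_for` are hypothetical names, and treating every unsuffixed skill as Core is an assumption drawn from the table, not documented behavior of any tool.

```python
# Suffixes and language labels copied from the table above.
SUFFIX_TO_LANGUAGE = {
    "-py": "Python",
    "-dotnet": ".NET",
    "-ts": "TypeScript",
    "-java": "Java",
    "-rust": "Rust",
}


def language_for(skill_name):
    """Infer a skill's language from its directory-name suffix."""
    for suffix, language in SUFFIX_TO_LANGUAGE.items():
        if skill_name.endswith(suffix):
            return language
    # Assumption: skills without a language suffix belong to Core.
    return "Core"


if __name__ == "__main__":
    for name in ("azure-cosmos-db-py", "azure-identity-dotnet", "mcp-builder"):
        print(name, "->", language_for(name))
```

A Core skill whose name happened to end in one of these suffixes would be misclassified, so a real tool would likely need an explicit allowlist as well.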

---

### Core

> 6 skills — tooling, infrastructure, language-agnostic

| Skill | Description |
|-------|-------------|
| [azd-deployment](.github/skills/azd-deployment/) | Deploy to Azure Container Apps with Azure Developer CLI (azd). Bicep infrastructure, remote builds, multi-service deployments. |
| [copilot-sdk](.github/skills/copilot-sdk/) | Build applications powered by GitHub Copilot using the Copilot SDK. Session management, custom tools, streaming, hooks, MCP servers, BYOK. |
| [github-issue-creator](.github/skills/github-issue-creator/) | Convert raw notes, error logs, or screenshots into structured GitHub issues. |
| [mcp-builder](.github/skills/mcp-builder/) | Build MCP servers for LLM tool integration. Python (FastMCP), Node/TypeScript, or C#/.NET. |
| [podcast-generation](.github/skills/podcast-generation/) | Generate podcast-style audio with Azure OpenAI Realtime API. Full-stack React + FastAPI + WebSocket. |
| [skill-creator](.github/skills/skill-creator/) | Guide for creating effective skills for AI coding agents. |

---

### Python

> 41 skills • suffix: `-py`

<details>
<summary><strong>Foundry & AI</strong> (7 skills)</summary>

| Skill | Description |
|-------|-------------|
| [agent-framework-azure-ai-py](.github/skills/agent-framework-azure-ai-py/) | Agent Framework SDK — persistent agents, hosted tools, MCP servers, streaming. |
| [azure-ai-contentsafety-py](.github/skills/azure-ai-contentsafety-py/) | Content Safety SDK — detect harmful content in text/images with multi-severity classification. |
| [azure-ai-contentunderstanding-py](.github/skills/azure-ai-contentunderstanding-py/) | Content Understanding SDK — multimodal extraction from documents, images, audio, video. |
| [azure-ai-evaluation-py](.github/skills/azure-ai-evaluation-py/) | Evaluation SDK — quality, safety, and custom evaluators for generative AI apps. |
| [agents-v2-py](.github/skills/agents-v2-py/) | Foundry Agents SDK — container-based agents with ImageBasedHostedAgentDefinition, custom images, tools. |
| [azure-ai-projects-py](.github/skills/azure-ai-projects-py/) | High-level Foundry SDK — project client, versioned agents, evals, connections, OpenAI-compatible clients. |
| [azure-search-documents-py](.github/skills/azure-search-documents-py/) | AI Search SDK — vector search, hybrid search, semantic ranking, indexing, skillsets. |

</details>

<details>
<summary><strong>M365</strong> (1 skill)</summary>

| Skill | Description |
|-------|-------------|
| [m365-agents-py](.github/skills/m365-agents-py/) | Microsoft 365 Agents SDK — aiohttp hosting, AgentApplication routing, streaming, Copilot Studio client. |

</details>

<details>
<summary><strong>AI Services</strong> (8 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-ai-ml-py](.github/skills/azure-ai-ml-py/) | ML SDK v2 — workspaces, jobs, models, datasets, compute, pipelines. |
| [azure-ai-textanalytics-py](.github/skills/azure-ai-textanalytics-py/) | Text Analytics — sentiment, entities, key phrases, PII detection, healthcare NLP. |
| [azure-ai-transcription-py](.github/skills/azure-ai-transcription-py/) | Transcription SDK — real-time and batch speech-to-text with timestamps, diarization. |
| [azure-ai-translation-document-py](.github/skills/azure-ai-translation-document-py/) | Document Translation — batch translate Word, PDF, Excel with format preservation. |
| [azure-ai-translation-text-py](.github/skills/azure-ai-translation-text-py/) | Text Translation — real-time translation, transliteration, language detection. |
| [azure-ai-vision-imageanalysis-py](.github/skills/azure-ai-vision-imageanalysis-py/) | Vision SDK — captions, tags, objects, OCR, people detection, smart cropping. |
| [azure-ai-voicelive-py](.github/skills/azure-ai-voicelive-py/) | Voice Live SDK — real-time bidirectional voice AI with WebSocket, VAD, avatars. |
| [azure-speech-to-text-rest-py](.github/skills/azure-speech-to-text-rest-py/) | Speech to Text REST API — transcribe short audio (≤60 seconds) via HTTP without Speech SDK. |

</details>

<details>
<summary><strong>Data & Storage</strong> (7 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-cosmos-db-py](.github/skills/azure-cosmos-db-py/) | Cosmos DB patterns — FastAPI service layer, dual auth, partition strategies, TDD. |
| [azure-cosmos-py](.github/skills/azure-cosmos-py/) | Cosmos DB SDK — document CRUD, queries, containers, globally distributed data. |
| [azure-data-tables-py](.github/skills/azure-data-tables-py/) | Tables SDK — NoSQL key-value storage, entity CRUD, batch operations. |
| [azure-storage-blob-py](.github/skills/azure-storage-blob-py/) | Blob Storage — upload, download, list, containers, lifecycle management. |
| [azure-storage-file-datalake-py](.github/skills/azure-storage-file-datalake-py/) | Data Lake Gen2 — hierarchical file systems, big data analytics. |
| [azure-storage-file-share-py](.github/skills/azure-storage-file-share-py/) | File Share — SMB file shares, directories, cloud file operations. |
| [azure-storage-queue-py](.github/skills/azure-storage-queue-py/) | Queue Storage — reliable message queuing, task distribution. |

</details>

<details>
<summary><strong>Messaging & Events</strong> (4 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-eventgrid-py](.github/skills/azure-eventgrid-py/) | Event Grid — publish events, CloudEvents, event-driven architectures. |
| [azure-eventhub-py](.github/skills/azure-eventhub-py/) | Event Hubs — high-throughput streaming, producers, consumers, checkpointing. |
| [azure-messaging-webpubsubservice-py](.github/skills/azure-messaging-webpubsubservice-py/) | Web PubSub — real-time messaging, WebSocket connections, pub/sub. |
| [azure-servicebus-py](.github/skills/azure-servicebus-py/) | Service Bus — queues, topics, subscriptions, enterprise messaging. |

</details>

<details>
<summary><strong>Entra</strong> (2 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-identity-py](.github/skills/azure-identity-py/) | Identity SDK — DefaultAzureCredential, managed identity, service principals. |
| [azure-keyvault-py](.github/skills/azure-keyvault-py/) | Key Vault — secrets, keys, and certificates management. |

</details>

<details>
<summary><strong>Monitoring</strong> (4 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-monitor-ingestion-py](.github/skills/azure-monitor-ingestion-py/) | Monitor Ingestion — send custom logs via Logs Ingestion API. |
| [azure-monitor-opentelemetry-exporter-py](.github/skills/azure-monitor-opentelemetry-exporter-py/) | OpenTelemetry Exporter — low-level export to Application Insights. |
| [azure-monitor-opentelemetry-py](.github/skills/azure-monitor-opentelemetry-py/) | OpenTelemetry Distro — one-line App Insights setup with auto-instrumentation. |
| [azure-monitor-query-py](.github/skills/azure-monitor-query-py/) | Monitor Query — query Log Analytics workspaces and Azure metrics. |

</details>

<details>
<summary><strong>Integration & Management</strong> (5 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-appconfiguration-py](.github/skills/azure-appconfiguration-py/) | App Configuration — centralized config, feature flags, dynamic settings. |
| [azure-containerregistry-py](.github/skills/azure-containerregistry-py/) | Container Registry — manage container images, artifacts, repositories. |
| [azure-mgmt-apicenter-py](.github/skills/azure-mgmt-apicenter-py/) | API Center — API inventory, metadata, governance. |
| [azure-mgmt-apimanagement-py](.github/skills/azure-mgmt-apimanagement-py/) | API Management — APIM services, APIs, products, policies. |
| [azure-mgmt-botservice-py](.github/skills/azure-mgmt-botservice-py/) | Bot Service — create and manage Azure Bot resources. |

</details>

<details>
<summary><strong>Patterns & Frameworks</strong> (3 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-mgmt-fabric-py](.github/skills/azure-mgmt-fabric-py/) | Fabric Management — Microsoft Fabric capacities and resources. |
| [fastapi-router-py](.github/skills/fastapi-router-py/) | FastAPI routers — CRUD operations, auth dependencies, response models. |
| [pydantic-models-py](.github/skills/pydantic-models-py/) | Pydantic patterns — Base, Create, Update, Response, InDB model variants. |

</details>

---

### .NET

> 28 skills • suffix: `-dotnet`

<details>
<summary><strong>Foundry & AI</strong> (6 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-ai-document-intelligence-dotnet](.github/skills/azure-ai-document-intelligence-dotnet/) | Document Intelligence — extract text, tables from invoices, receipts, IDs, forms. |
| [azure-ai-openai-dotnet](.github/skills/azure-ai-openai-dotnet/) | Azure OpenAI — chat, embeddings, image generation, audio, assistants. |
| [azure-ai-projects-dotnet](.github/skills/azure-ai-projects-dotnet/) | AI Projects SDK — Foundry project client, agents, connections, evals. |
| [azure-ai-voicelive-dotnet](.github/skills/azure-ai-voicelive-dotnet/) | Voice Live — real-time voice AI with bidirectional WebSocket. |
| [azure-mgmt-weightsandbiases-dotnet](.github/skills/azure-mgmt-weightsandbiases-dotnet/) | Weights & Biases — ML experiment tracking via Azure Marketplace. |
| [azure-search-documents-dotnet](.github/skills/azure-search-documents-dotnet/) | AI Search — full-text, vector, semantic, hybrid search. |

</details>

<details>
<summary><strong>M365</strong> (1 skill)</summary>

| Skill | Description |
|-------|-------------|
| [m365-agents-dotnet](.github/skills/m365-agents-dotnet/) | Microsoft 365 Agents SDK — ASP.NET Core hosting, AgentApplication routing, Copilot Studio client. |

</details>

<details>
<summary><strong>Data & Storage</strong> (6 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-mgmt-fabric-dotnet](.github/skills/azure-mgmt-fabric-dotnet/) | Fabric ARM — provision, scale, suspend/resume Fabric capacities. |
| [azure-resource-manager-cosmosdb-dotnet](.github/skills/azure-resource-manager-cosmosdb-dotnet/) | Cosmos DB ARM — create accounts, databases, containers, RBAC. |
| [azure-resource-manager-mysql-dotnet](.github/skills/azure-resource-manager-mysql-dotnet/) | MySQL Flexible Server — servers, databases, firewall, HA. |
| [azure-resource-manager-postgresql-dotnet](.github/skills/azure-resource-manager-postgresql-dotnet/) | PostgreSQL Flexible Server — servers, databases, firewall, HA. |
| [azure-resource-manager-redis-dotnet](.github/skills/azure-resource-manager-redis-dotnet/) | Redis ARM — cache instances, firewall, geo-replication. |
| [azure-resource-manager-sql-dotnet](.github/skills/azure-resource-manager-sql-dotnet/) | SQL ARM — servers, databases, elastic pools, failover groups. |

</details>

<details>
<summary><strong>Messaging</strong> (3 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-eventgrid-dotnet](.github/skills/azure-eventgrid-dotnet/) | Event Grid — publish events, CloudEvents, EventGridEvents. |
| [azure-eventhub-dotnet](.github/skills/azure-eventhub-dotnet/) | Event Hubs — high-throughput streaming, producers, processors. |
| [azure-servicebus-dotnet](.github/skills/azure-servicebus-dotnet/) | Service Bus — queues, topics, sessions, dead letter handling. |

</details>

<details>
<summary><strong>Entra</strong> (3 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-identity-dotnet](.github/skills/azure-identity-dotnet/) | Identity SDK — DefaultAzureCredential, managed identity, service principals. |
| [azure-security-keyvault-keys-dotnet](.github/skills/azure-security-keyvault-keys-dotnet/) | Key Vault Keys — key creation, rotation, encrypt/decrypt, sign/verify. |
| [microsoft-azure-webjobs-extensions-authentication-events-dotnet](.github/skills/microsoft-azure-webjobs-extensions-authentication-events-dotnet/) | Entra Auth Events — custom claims, token enrichment, attribute collection. |

</details>

<details>
<summary><strong>Compute & Integration</strong> (6 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-maps-search-dotnet](.github/skills/azure-maps-search-dotnet/) | Azure Maps — geocoding, routing, map tiles, weather. |
| [azure-mgmt-apicenter-dotnet](.github/skills/azure-mgmt-apicenter-dotnet/) | API Center — API inventory, governance, versioning, discovery. |
| [azure-mgmt-apimanagement-dotnet](.github/skills/azure-mgmt-apimanagement-dotnet/) | API Management ARM — APIM services, APIs, products, policies. |
| [azure-mgmt-botservice-dotnet](.github/skills/azure-mgmt-botservice-dotnet/) | Bot Service ARM — bot resources, channels (Teams, DirectLine). |
| [azure-resource-manager-durabletask-dotnet](.github/skills/azure-resource-manager-durabletask-dotnet/) | Durable Task ARM — schedulers, task hubs, retention policies. |
| [azure-resource-manager-playwright-dotnet](.github/skills/azure-resource-manager-playwright-dotnet/) | Playwright Testing ARM — workspaces, quotas. |

</details>

<details>
<summary><strong>Monitoring & Partner</strong> (3 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-mgmt-applicationinsights-dotnet](.github/skills/azure-mgmt-applicationinsights-dotnet/) | Application Insights — components, web tests, workbooks. |
| [azure-mgmt-arizeaiobservabilityeval-dotnet](.github/skills/azure-mgmt-arizeaiobservabilityeval-dotnet/) | Arize AI — ML observability via Azure Marketplace. |
| [azure-mgmt-mongodbatlas-dotnet](.github/skills/azure-mgmt-mongodbatlas-dotnet/) | MongoDB Atlas — manage Atlas orgs as Azure ARM resources. |

</details>

---

### TypeScript

> 24 skills • suffix: `-ts`

<details>
<summary><strong>Foundry & AI</strong> (6 skills)</summary>

| Skill | Description |
|-------|-------------|
| [azure-ai-contentsafety-ts](.github/skills/azure-ai-contentsafety-ts/) | Content Safety — moderate text/images, detect harmful content. |
| [azure-ai-document-intelligence-ts](.github/skills/azure-ai-document-intelligence-ts/) | Document Intelligence — extract from invoices, receipts, IDs, forms. |
| [azure-ai-projects-ts](.github/skills/azure-ai-projects-ts/) | AI Projects SDK — Foundry client, agents, connections, evals. |
| [azure-ai-translation-ts](.github/skills/azure-ai-translation-ts/) | Translation — text translation, transliteration, document batch. |
| [azure-ai-voicelive-ts](.github/skills/azure-ai-voicelive-ts/) | Voice Live — real-time voice AI with WebSocket, Node.js or browser. |
| [azure-search-documents-ts](.github/skills/azure-search-documents-ts/) | AI Search — vector/hybrid search, semantic ranking, knowledge bases. |

</details>

<details>

|

||||

<summary><strong>M365</strong> (1 skill)</summary>

|

||||

|

||||

| Skill | Description |

|

||||

|-------|-------------|

|